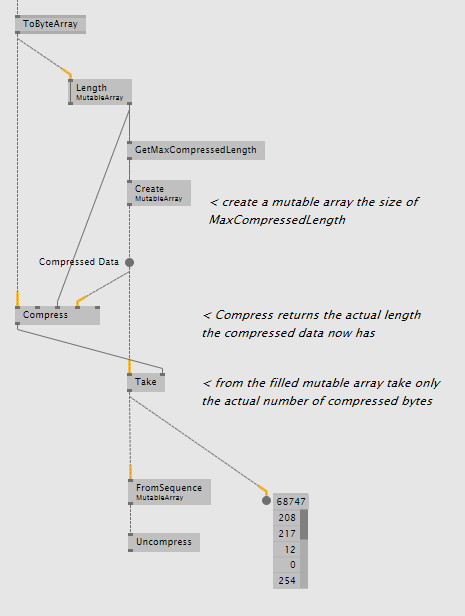

snappy_unittest -norun_microbenchmarks -lzo testdata/* They probably won’t apply to things like numeric or text data.~/snappy-read-only $. These are the scores for a data which consist of 700kB rows, each containing a binary image data. Snappy-java with its Apache license is a clear winner. But it doesn’t matter because of our inability to redistribute it. I am suspicious about something in LZO scores since I was expecting much better performance. Data size was 403MB which mean around 40% compression ratio and we read our data at 6.37ms per item which indicate 25% increase in IO performance.Ĭonclusion AlgorithmĜompression Ratio IO performance increase The same compress-each-item-seperately mechanism applies here with press and Snappy.uncompress. Last one was recently announced snappy of Google. Reading performance increased 21% with 6.63ms passed for one item. At this test our data size was 400MB meaning a compression ratio of 40%. It works the same way as it does too, like using LZFEncoder.encode just before writing to HBase and using code just after reading. Third test was a LZF implementation, ning-compress following Ferdy Galema’s response to previous Deflater tip.

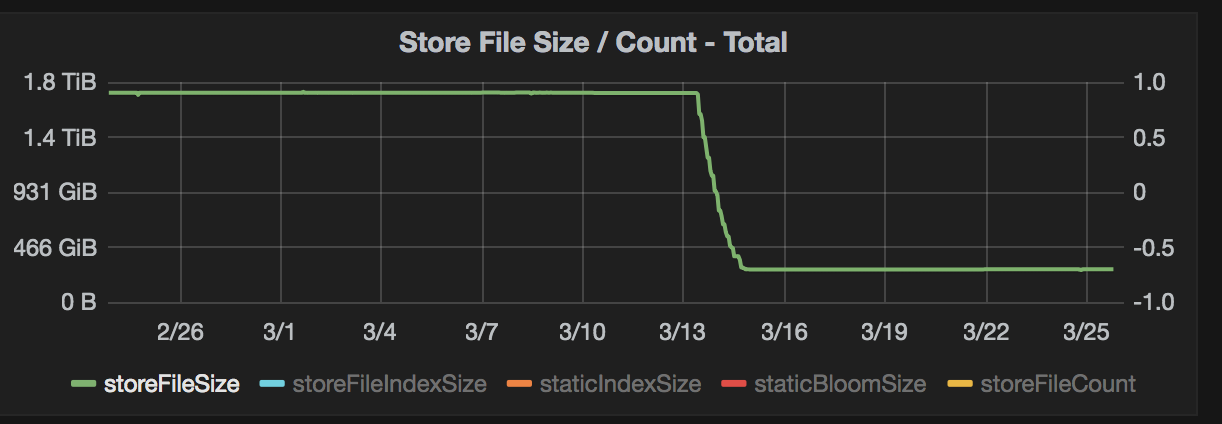

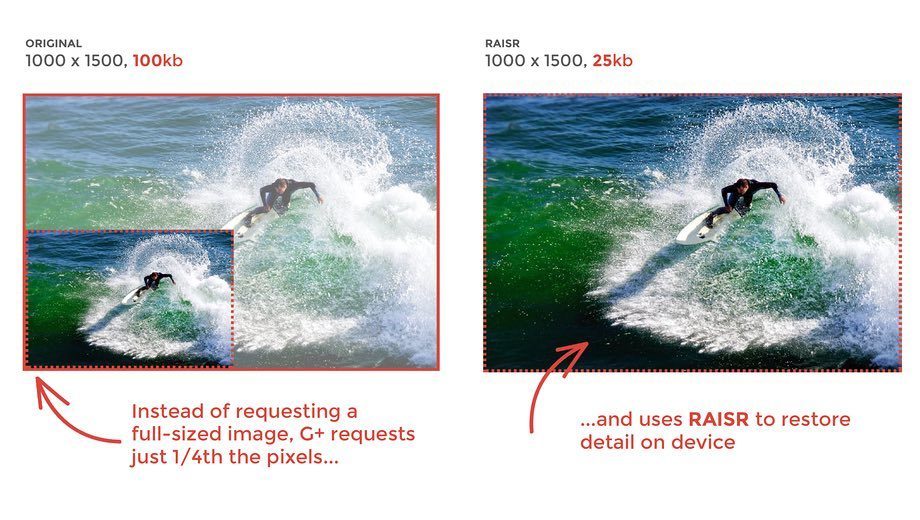

Anyways, our 1000 item set resulted in 398MB meaning a 41% compression ratio and we’ve seen a 5% increase in reading performance too: we read one item in 8.1ms compared to 8.41ms uncompressed. LZO on the other hand will compress the whole file in HDFS, so in a regular setup it is expected to have better compression ratios since there will be similarities among the rows and it will exploit those. All other methods i talk about here compress data per item basis. On the other hand this complexity is sure to have a benefit. You can check here and here for instructions on how to set it up. It is somewhat harder to use in hadoop (at least the recommended way), but i’ve managed. Although we are unable to re-distribute it because of licensing issues, we still felt the urge test and see what we are missing. But our reading performance suffered 16%, increasing the time per row to 9.73ms. The total size of our 1000 items decreased to 346MB meaning a compression ratio of 48%. Using no compression, our test data of 1000 items takes up 670MB and our MapReduce tasks are able to read a cell in 8.41ms.įirst algorithm we tried was ZLIB, or /Inflater following this post by It simply involves using Deflater just before “Put"ting data into HBase, and using Inflater just after reading data from "Result"s. Each cell contains an image, more specifically a subset of an image so it is binary and supposedly not as compressable as some log file. Our data is like 700kB per row and for testing purposes we have 100k rows. Of course these are general talks and to see real performance changes and compression ratios, one have to try those algorithms with his/her own data. Because of that hadoop applications prefer LZO, a real-time fast compression library, to ZLIB variants. Large capacity disks are far cheaper than fast storage solutions (think SSDs) so it is better for a compression algorithm being faster than being able to give higher compression ratios. 
If the infrastructure starves on disk capacity but has no performance problems it may be logical to use an algorithm that give huge compression ratios, losing some time on CPU but that’s usually not the case. It is simply trading IO load for CPU load. On the other hand we now need to uncompress that data so we use some CPU cycles. By using some sort of compression we reduce the size of our data achieving faster reads. Hadoop workloads i know about are generally data-intensive, thus making the data reads a bottleneck in overall application performance. Considering hadoop clusters almost always work on commodity machines, the reason for that is simple to explain: disks are slow. HBase documentation and several posts in hbase-user mailing list tell that using some form of compression for storing data may lead to an increase in IO performance.

This post is about a recent research which tries to increase IO performance for our MapReduce jobs.

Although i am not able to discuss details further than what writes on my linkedin profile, i try to talk about general findings which may help others trying to achive similar goals. Now and then, i talk about our usage of HBase and MapReduce.
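Here is a minimal sketch of the per-item ZLIB approach described above. It assumes plain `java.util.zip.Deflater`/`Inflater` and byte-array cell values. The class and method names (`CellDeflate`, `compress`, `decompress`) are hypothetical, and the HBase `Put`/`Result` calls are left out. In a job, `compress` would be applied to a value just before it goes into a `Put`, and `decompress` to the bytes read back from a `Result`. The LZF and Snappy variants would have the same shape, with `LZFEncoder.encode`/`LZFDecoder.decode` or `Snappy.compress`/`Snappy.uncompress` taking the place of the two helpers.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;
import java.util.Arrays;
import java.util.zip.DataFormatException;
import java.util.zip.Deflater;
import java.util.zip.Inflater;

/**
 * Hypothetical helper that compresses each cell value on its own (ZLIB via Deflater),
 * so it can be applied just before a Put and just after reading a Result.
 */
final class CellDeflate {
    private static final int BUFFER_SIZE = 64 * 1024;

    private CellDeflate() {}

    /** Compresses a single cell value into the zlib format. */
    static byte[] compress(byte[] value) {
        Deflater deflater = new Deflater(Deflater.DEFAULT_COMPRESSION);
        try {
            deflater.setInput(value);
            deflater.finish();
            ByteArrayOutputStream out = new ByteArrayOutputStream();
            byte[] buf = new byte[BUFFER_SIZE];
            while (!deflater.finished()) {
                int n = deflater.deflate(buf);
                out.write(buf, 0, n);
            }
            return out.toByteArray();
        } finally {
            deflater.end(); // free native zlib memory right away
        }
    }

    /** Restores a cell value produced by compress(). */
    static byte[] decompress(byte[] compressed) throws DataFormatException {
        Inflater inflater = new Inflater();
        try {
            inflater.setInput(compressed);
            ByteArrayOutputStream out = new ByteArrayOutputStream();
            byte[] buf = new byte[BUFFER_SIZE];
            while (!inflater.finished()) {
                int n = inflater.inflate(buf);
                out.write(buf, 0, n);
                // No progress and not finished: the input was truncated or needs a dictionary.
                if (n == 0 && !inflater.finished()
                        && (inflater.needsInput() || inflater.needsDictionary())) {
                    throw new DataFormatException("truncated input or preset dictionary required");
                }
            }
            return out.toByteArray();
        } finally {
            inflater.end();
        }
    }

    public static void main(String[] args) throws DataFormatException {
        byte[] cell = "example cell value ".repeat(1000).getBytes(StandardCharsets.UTF_8);
        byte[] stored = compress(cell);       // what would go into the Put
        byte[] restored = decompress(stored); // what the reader would get back
        System.out.println(cell.length + " -> " + stored.length + " bytes, round trip ok: "
                + Arrays.equals(cell, restored));
    }
}
```

Calling `end()` in a `finally` block matters in a long-running MapReduce task. Both `Deflater` and `Inflater` hold native zlib memory until they are ended or garbage-collected.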
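The summary percentages can be recomputed from the raw measurements. This sketch assumes that "size reduction" means 1 - compressed/uncompressed size, and that "IO performance increase" means the relative drop in per-item read time against the 8.41ms uncompressed baseline. That reading reproduces the percentages in the text to within about one percentage point. `CompressionSummary` is a hypothetical name.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Recomputes the summary percentages from the measurements reported in this post. */
final class CompressionSummary {
    static final double BASE_MB = 670.0; // uncompressed size of the 1000-item test set
    static final double BASE_MS = 8.41;  // uncompressed read time per item

    /** Size reduction in percent: 100 * (1 - compressed / uncompressed). */
    static double sizeReductionPct(double sizeMb) {
        return 100.0 * (1.0 - sizeMb / BASE_MB);
    }

    /** Drop in per-item read time in percent; negative means reads got slower. */
    static double readTimeGainPct(double msPerItem) {
        return 100.0 * (1.0 - msPerItem / BASE_MS);
    }

    public static void main(String[] args) {
        // algorithm -> {stored size in MB, read time in ms per item}
        Map<String, double[]> measured = new LinkedHashMap<>();
        measured.put("ZLIB", new double[] {346, 9.73});
        measured.put("LZO", new double[] {398, 8.10});
        measured.put("LZF", new double[] {400, 6.63});
        measured.put("Snappy", new double[] {403, 6.37});
        for (Map.Entry<String, double[]> e : measured.entrySet()) {
            double[] m = e.getValue();
            System.out.printf("%-6s size reduction %3.0f%%, read time gain %+4.0f%%%n",
                    e.getKey(), sizeReductionPct(m[0]), readTimeGainPct(m[1]));
        }
    }
}
```

Measuring throughput (items per second) instead would give larger gains for the same timings. For LZF, for example, 8.41/6.63 is about 1.27, so it is worth stating which definition a benchmark uses.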
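The argument that compressing a whole file can beat per-item compression can be illustrated with a small experiment. This sketch compresses a batch of similar rows twice: once item by item, and once as a single stream. It uses `Deflater` purely as a stand-in, since LZO is not part of the JDK. The rows are hypothetical, short, and deliberately similar. For 700kB binary image cells the effect is likely much smaller, because per-item header overhead becomes negligible and similar content in neighbouring rows may lie beyond the compressor's window (32KB for Deflate).

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;
import java.util.zip.Deflater;

/** Compares per-item compression with compressing all items as one stream (Deflater as a stand-in). */
final class PerItemVsWhole {
    /** Returns the zlib-compressed size of the given bytes. */
    static int deflatedSize(byte[] data) {
        Deflater d = new Deflater();
        try {
            d.setInput(data);
            d.finish();
            byte[] buf = new byte[8192];
            int total = 0;
            while (!d.finished()) {
                total += d.deflate(buf);
            }
            return total;
        } finally {
            d.end();
        }
    }

    /** Each row compressed independently, as with Deflater, LZF or Snappy per cell. */
    static int perItemSize(List<byte[]> rows) {
        int total = 0;
        for (byte[] row : rows) {
            total += deflatedSize(row);
        }
        return total;
    }

    /** All rows concatenated and compressed once, closer to file-level compression. */
    static int wholeSize(List<byte[]> rows) {
        ByteArrayOutputStream all = new ByteArrayOutputStream();
        for (byte[] row : rows) {
            all.write(row, 0, row.length);
        }
        return deflatedSize(all.toByteArray());
    }

    /** Hypothetical rows that share most of their content. */
    static List<byte[]> similarRows(int count) {
        List<byte[]> rows = new ArrayList<>();
        for (int i = 0; i < count; i++) {
            String row = "row-" + i + " camera=cam01 width=640 height=480 format=gray8 tile=" + (i % 7);
            rows.add(row.getBytes(StandardCharsets.UTF_8));
        }
        return rows;
    }

    public static void main(String[] args) {
        List<byte[]> rows = similarRows(1000);
        System.out.println("per item: " + perItemSize(rows) + " bytes, whole: " + wholeSize(rows) + " bytes");
    }
}
```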
